Data Labeling refers to the process of identifying unorganized and raw data in formats including images, text files, videos, etc.) and adding one or more relevant labels/tags to every data element. The labels provide information to help machine learning models learn the context of every data element. When the Machine Learning models and algorithms learn what each data signifies, they make predictions based on the information gathered. The labels must specify what each data signifies and is all about so that Machine Learning models and algorithms can make necessary predictions. The purpose of data labeling is to convey basic information that helps identify a specific data element.

Machine Learning technology has the capability to trace and identify patterns automatically, simply by running specific learning algorithms for relevant labeled datasets. ML actually stimulates the human-decision making process to make it better and more accurate.

Automated Data Labeling

Automated Data Labeling is the process where the machine algorithm itself annotates your data based on your input. This is faster method of Data Annotation, however, this needs to be configured correctly, otherwise it may lead into wrong data being annotated.

Pre-annotating a part of or an entire dataset

Human expertise will always be needed to review and correct the results from automated labeling, to complete the annotation process. Not everything can be annotated by automation, not every time automation can get all annotations right. There will be exceptions and miscalculations.

Reducing burden on the human side

Manual reviewing is necessary no matter how advanced the automated system is. But, an automated data labeling model can annex a level of confidence as per the use case, degree of complexity, and related factors. Once the automated system annotates all the datasets, the annotations that derive lower confidence scores are sent to manual expertise for review.

Data Labeling Techniques in Machine Learning

Semi-Supervised Learning

Semi-supervised learning refers to the standard practice of incorporating both supervised and unsupervised data to label large volumes of data with a comparatively smaller set of labeled data. The supervised learning models in ML are trained on the smaller set of labeled data to predict labels of datasets that have not been labeled yet or to assign unlabeled datasets with proxy labels. When the proxy labels meet the set criteria, they are added to the training dataset along with the model that the updated dataset has trained again. This entire process repeats itself until the system achieves the expected model accuracy.

Transfer Learning

In the transfer learning technique of data labeling in Machine Learning, a pre-trained ML module labels the data. In this method, the system usually uses a model that has been trained on a dataset that is similar to or resonates with the dataset you want to label. The data sets are fine tuned until the desired accuracy is ascertained by the system.

Reinforcement Learning

The reinforcement learning method in Machine Learning works in line with the trial-and-error approach to make accurate data-backed predictions, resolve classification imparities, and address regression.

Supervised Learning

The Supervised Learning method is all about managing a large volume of manually labeled datasets, where the model compares the labeled data with the freshly acquired data to spot errors and gaps. The model learns to predict future possibilities of fraudulent acts pertaining to data.

Unsupervised Learning

Unsupervised Learning deals with raw data with the goal to find a suitable structure and organize unstructured data into data clusters. Unsupervised Learning is most commonly useful in managing transactional data that includes tasks such as tracking customer preferences, identifying customer segments, and more.

Most Common Types of Data Labeling

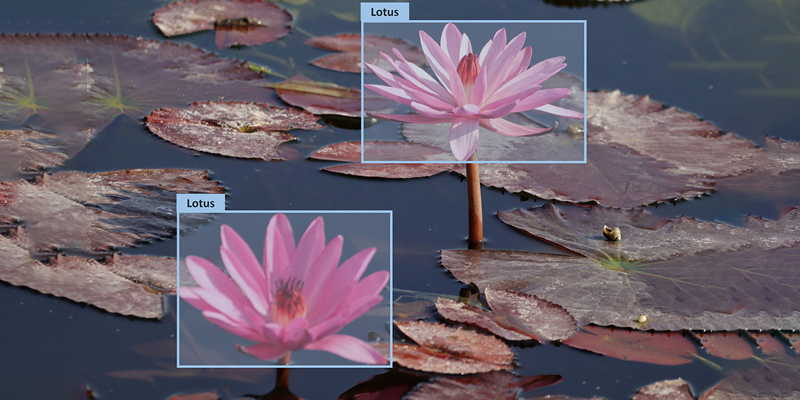

Computer Vision

Label images, pixels, and other key points are required at hand before you start building a computer vision system. The other way to begin is to set a bounding box, which is a border to enclose the digital image completely, with the purpose of generating your dataset for training. Example scenarios would include classifying images by quality or resolution, classifying images as per the content within the images, and more.

Natural Language Processing

Natural Language processing begins with manually identifying the important part of the text or with tagging the text with relevant labels to generate your dataset for training. Example scenarios could include identifying parts of speech in a piece of content, or identifying a clutter of proper nouns or even reading text from images, PDFs, and other file formats.

Audio Processing

Audio Processing begins with manually transcribing the audio content into written text. The next step would be to look for deeper information and insights about the audio by adding tags and categorizing the audio data, while the categorized audio now becomes the dataset.

Natural language generation

Natural Language Generation is a subset of Natural Language processing that enables computers to write by producing a human language text response based on relevant data inputs. The text can be converted into a speech format as well.

Data Labeling Best Practices

> Interactive, smart, & comprehensive task interfaces to reduce load of data recognition & switching context of labeling

> A streamlined and reliable labeler consensus to reduce risks of errors by individual data annotators

> In-depth label auditing to determine label accuracy and modify/update labels as and where necessary

> Active learning to identify the most useful datasets labeled by humans from among the lot.

Conclusion

AIW provides you with advanced and cost-effective Data Labeling & Data Annotation Services, ensuring highly accurate and timely human-in-the-loop labeled and annotated data across various domains and industry verticals. AIW is designed to help AI-enabled businesses achieve and ensure superior training of AI and ML models.